-

Latest evacuee from hantavirus-hit cruise lands in Europe

Latest evacuee from hantavirus-hit cruise lands in Europe

-

Rubio meets US pope in bid to ease tensions

-

Women linked to IS fighters return to Australia from Middle East

Women linked to IS fighters return to Australia from Middle East

-

Shell profit jumps as Mideast war fuels oil prices

-

Oil sinks, Tokyo leads Asia stock surge on growing Mideast peace hopes

Oil sinks, Tokyo leads Asia stock surge on growing Mideast peace hopes

-

India vows to crush terror 'ecosystem', a year after Pakistan conflict

-

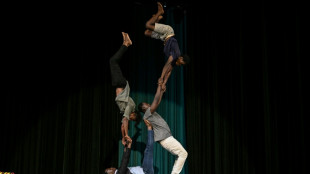

Circus tackles jihadist nightmares of Burkina Faso's children

Circus tackles jihadist nightmares of Burkina Faso's children

-

Iran denies ship attack as Trump warns of renewed bombing, eyes deal

-

Badminton looks to future with 'evolution and innovation'

Badminton looks to future with 'evolution and innovation'

-

Troubled waters: Jakarta battles deadly, invasive suckerfish

-

Senegal's children mourn in silence when migrant parents disappear

Senegal's children mourn in silence when migrant parents disappear

-

EU weighs options as summer jet fuel threat looms

-

Spurs thrash Timberwolves as Knicks edge Sixers in NBA playoffs

Spurs thrash Timberwolves as Knicks edge Sixers in NBA playoffs

-

Australia to force gas giants to reserve fuel for domestic use

-

AirAsia signs $19bn deal for 150 Airbus A220 jets

AirAsia signs $19bn deal for 150 Airbus A220 jets

-

Japan fires missiles during drills, drawing China rebuke

-

Toluca rout Son's LAFC to set up all-Mexican CONCACAF final

Toluca rout Son's LAFC to set up all-Mexican CONCACAF final

-

Vingegaard begins bid for Giro-Tour double with Pellizzari boosting home hopes

-

Roma's Champions League return back on as Milan, Juve wobble

Roma's Champions League return back on as Milan, Juve wobble

-

Tokyo leads Asia stock surge on growing Mideast peace hopes

-

Australia cricket great Warner to 'accept' drink-drive charge: lawyer

Australia cricket great Warner to 'accept' drink-drive charge: lawyer

-

Brunson steers Knicks to 2-0 lead with tight win over Sixers

-

Rubio seeks to ease tensions with US pope

Rubio seeks to ease tensions with US pope

-

AI disinfo tests South Korean laws ahead of local elections

-

Australian state overturns Melbourne ban on World Cup watch party

Australian state overturns Melbourne ban on World Cup watch party

-

Colombian ex-fisherman swaps trade for saving Caribbean coral

-

Lobito Corridor: Africa's mega-project facing delivery test

Lobito Corridor: Africa's mega-project facing delivery test

-

Africa's Lobito Corridor chief tells AFP business, not geopolitics, drives strategy

-

Trump to host Lula in test of fitful relationship

Trump to host Lula in test of fitful relationship

-

K-pop stars BTS draw 50,000-strong crowd in Mexico

-

Britons set to punish Starmer's Labour in local polls

Britons set to punish Starmer's Labour in local polls

-

Wars in Middle East, backyard loom over ASEAN summit

-

US court releases purported Epstein suicide note

US court releases purported Epstein suicide note

-

Israeli court rejects flotilla activists' appeal challenging detention

-

Victim's lawyer alleges Boeing was 'negligent' in 2019 Ethiopian crash

Victim's lawyer alleges Boeing was 'negligent' in 2019 Ethiopian crash

-

Williamson named in New Zealand squad for Ireland, England Tests

-

PSG add muscle to magic as another Champions League final beckons

PSG add muscle to magic as another Champions League final beckons

-

Tigers' pitcher Valdez suspended for hitting opponent

-

Trump says Iran deal 'very possible' but threatens strikes if talks fail

Trump says Iran deal 'very possible' but threatens strikes if talks fail

-

Musk's SpaceX strikes data center deal with Anthropic

-

Bayern lament lack of 'killer' instinct after PSG elimination

Bayern lament lack of 'killer' instinct after PSG elimination

-

Virus-hit cruise ship heads for Spain as evacuees land in Europe

-

Holders PSG edge Bayern Munich to reach Champions League final

Holders PSG edge Bayern Munich to reach Champions League final

-

Russia warns diplomats in Kyiv to evacuate in case of strike

-

Hantavirus ship passenger: 'They didn't take it seriously enough'

Hantavirus ship passenger: 'They didn't take it seriously enough'

-

First hantavirus infection could not have been during cruise: WHO expert

-

Kentucky Derby-winner Golden Tempo to skip Preakness Stakes

Kentucky Derby-winner Golden Tempo to skip Preakness Stakes

-

Trump says Iran deal 'very possible', but threatens strikes if not

-

Lula heads to Washington to meet Trump in fraught election year

Lula heads to Washington to meet Trump in fraught election year

-

No timeline for injury return for 'frustrated' Doncic

Generative AI's most prominent skeptic doubles down

Two and a half years after ChatGPT rocked the world, scientist and writer Gary Marcus remains generative artificial intelligence's great skeptic, offering a counter-narrative to Silicon Valley's AI true believers.

Marcus became a prominent figure of the AI revolution in 2023, when he sat beside OpenAI chief Sam Altman at a Senate hearing in Washington as both men urged politicians to take the technology seriously and consider regulation.

Much has changed since then. Altman has abandoned his calls for caution, instead teaming up with Japan's SoftBank and funds in the Middle East to propel his company to sky-high valuations as he tries to make ChatGPT the next era-defining tech behemoth.

"Sam's not getting money anymore from the Silicon Valley establishment," and his seeking funding from abroad is a sign of "desperation," Marcus told AFP on the sidelines of the Web Summit in Vancouver, Canada.

Marcus's criticism centers on a fundamental belief: generative AI, the predictive technology that churns out seemingly human-level content, is simply too flawed to be transformative.

The large language models (LLMs) that power these capabilities are inherently broken, he argues, and will never deliver on Silicon Valley's grand promises.

"I'm skeptical of AI as it is currently practiced," he said. "I think AI could have tremendous value, but LLMs are not the way there. And I think the companies running it are not mostly the best people in the world."

His skepticism stands in stark contrast to the prevailing mood at the Web Summit, where most conversations among 15,000 attendees focused on generative AI's seemingly infinite promise.

Many believe humanity stands on the cusp of achieving superintelligence or artificial general intelligence (AGI), technology that could match and even surpass human capability.

That optimism has driven OpenAI's valuation to $300 billion, an unprecedented level for a startup, with billionaire Elon Musk's xAI racing to keep pace.

Yet for all the hype, the practical gains remain limited.

The technology excels mainly at coding assistance for programmers and text generation for office work. AI-created images, while often entertaining, serve primarily as memes or deepfakes, offering little obvious benefit to society or business.

Marcus, a longtime New York University professor, champions a fundamentally different approach to building AI -- one he believes might actually achieve human-level intelligence in ways that current generative AI never will.

"One consequence of going all-in on LLMs is that any alternative approach that might be better gets starved out," he explained.

This tunnel vision will "cause a delay in getting to AI that can help us beyond just coding -- a waste of resources."

- 'Right answers matter' -

Instead, Marcus advocates for neurosymbolic AI, an approach that attempts to rebuild human logic artificially rather than simply training computer models on vast datasets, as is done with ChatGPT and similar products like Google's Gemini or Anthropic's Claude.

He dismisses fears that generative AI will eliminate white-collar jobs, citing a simple reality: "There are too many white-collar jobs where getting the right answer actually matters."

This points to AI's most persistent problem: hallucinations, the technology's well-documented tendency to produce confident-sounding mistakes.

Even AI's strongest advocates acknowledge this flaw may be impossible to eliminate.

Marcus recalls a telling exchange from 2023 with LinkedIn founder Reid Hoffman, a Silicon Valley heavyweight: "He bet me any amount of money that hallucinations would go away in three months. I offered him $100,000 and he wouldn't take the bet."

Looking ahead, Marcus warns of a darker consequence once investors realize generative AI's limitations. Companies like OpenAI will inevitably monetize their most valuable asset: user data.

"The people who put in all this money will want their returns, and I think that's leading them toward surveillance," he said, pointing to Orwellian risks for society.

"They have all this private data, so they can sell that as a consolation prize."

Marcus acknowledges that generative AI will find useful applications in areas where occasional errors don't matter much.

"They're very useful for auto-complete on steroids: coding, brainstorming, and stuff like that," he said.

"But nobody's going to make much money off it because they're expensive to run, and everybody has the same product."

P.Anderson--BTB