-

Ethiopia and Sudan accuse each other of attacks

Ethiopia and Sudan accuse each other of attacks

-

Injured Mbappe faces backlash over Sardinia trip before Clasico

-

Vodafone to take full ownership of UK mobile operator

Vodafone to take full ownership of UK mobile operator

-

Stocks advance, oil falls as traders eye US-Iran ceasefire

-

Sabalenka ready to boycott Grand Slams over prize money

Sabalenka ready to boycott Grand Slams over prize money

-

Boko Haram attack on Chad army base kills at least 24: military, local officials

-

US trade gap widens in March as AI spending boosts imports

US trade gap widens in March as AI spending boosts imports

-

US threatens 'devastating' response to any Iran attack on shipping

-

Murphy warns snooker hopefuls to 'work harder' to match Chinese stars

Murphy warns snooker hopefuls to 'work harder' to match Chinese stars

-

Race to find port for hantavirus-stricken cruise ship

-

Romanian pro-EU PM loses no-confidence motion

Romanian pro-EU PM loses no-confidence motion

-

Edin Terzic to become Athletic Bilbao coach next season

-

Borthwick backed by RFU to take England to 2027 Rugby World Cup

Borthwick backed by RFU to take England to 2027 Rugby World Cup

-

EU hails 'leap forward' in ties with Russia's ally Armenia

-

German car-ramming suspect had mental health problems: reports

German car-ramming suspect had mental health problems: reports

-

Pyongyang calling: North Korea shows off own-brand phones

-

Iran warns 'not even started' in Hormuz

Iran warns 'not even started' in Hormuz

-

World body in dark over allegations against China badminton chief

-

Asian stocks drop amid fears over US-Iran ceasefire

Asian stocks drop amid fears over US-Iran ceasefire

-

China fireworks factory explosion kills 26, injures 61

-

China hails 'our era' as Wu Yize's world snooker triumph goes viral

China hails 'our era' as Wu Yize's world snooker triumph goes viral

-

Ex-model accuses French scout of grooming her for Epstein

-

Timberwolves eclipse Spurs as Knicks rout Sixers

Timberwolves eclipse Spurs as Knicks rout Sixers

-

Taiwan leader says island has 'right to engage with the world'

-

Yoko says oh no to 'John Lemon' beer

Yoko says oh no to 'John Lemon' beer

-

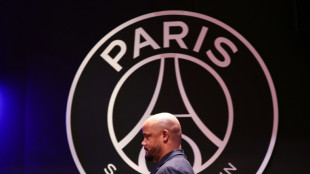

Bayern's Kompany promises repeat fireworks in PSG Champions League semi

-

A coaching great? Luis Enrique has PSG on brink of another Champions League final

A coaching great? Luis Enrique has PSG on brink of another Champions League final

-

Top five moments from the Met Gala

-

Brunson leads Knicks in rout of Sixers

Brunson leads Knicks in rout of Sixers

-

Retiring great Sophie Devine wants New Zealand back playing Tests

-

Ukraine pressures Russia as midnight ceasefire looms

Ukraine pressures Russia as midnight ceasefire looms

-

Stocks sink amid fears over US-Iran ceasefire

-

G7 trade ministers set to meet but not discuss latest US tariff threat

G7 trade ministers set to meet but not discuss latest US tariff threat

-

Sherlock Holmes fans recreate fateful duel at Swiss falls

-

Premier League losses soar for clubs locked in 'arms race'

Premier League losses soar for clubs locked in 'arms race'

-

'Spreading like wildfire': Fiji grapples with soaring HIV cases

-

For Israel's Circassians, food and language sustain an ancient heritage

For Israel's Circassians, food and language sustain an ancient heritage

-

'Super El Nino' raises fears for Asia reeling from Middle East conflict

-

Trouble in paradise: Colombia tourist jewel plagued by violence

Trouble in paradise: Colombia tourist jewel plagued by violence

-

Death toll in Brazil small plane crash rises to three

-

Pulitzers honor damning coverage of Trump and his policies

Pulitzers honor damning coverage of Trump and his policies

-

Lawline Exits Beta and Launches Full AI Legal Platform for Businesses and Individuals

-

Digi Power X Signs AI Colocation Agreement with Leading AI Compute Company for 40 MW Data Center in Columbiana, Alabama

Digi Power X Signs AI Colocation Agreement with Leading AI Compute Company for 40 MW Data Center in Columbiana, Alabama

-

Camino Appointments Senior Management to Build and Operate the Puquios Copper Mine in Chile and for Corporate Development

-

LA fire suspect had grudge against wealthy: prosecutors

LA fire suspect had grudge against wealthy: prosecutors

-

US-Iran ceasefire on brink as UAE reports attacks

-

Stars shine at Met Gala, fashion's biggest night

Stars shine at Met Gala, fashion's biggest night

-

Blake Lively, Justin Baldoni agree to end lengthy legal battle

-

Dolly Parton cancels Las Vegas shows over health concerns

Dolly Parton cancels Las Vegas shows over health concerns

-

Wu Yize: China's 'priest' who conquered the snooker world

'Happy (and safe) shooting!': Study says AI chatbots help plot attacks

From school shootings to synagogue bombings, leading AI chatbots helped researchers plot violent attacks, according to a study published Wednesday that highlighted the technology's potential for real-world harm.

Researchers from the nonprofit watchdog Center for Countering Digital Hate (CCDH) and CNN posed as 13-year-old boys in the United States and Ireland to test 10 chatbots, including ChatGPT, Google Gemini, Perplexity, DeepSeek, and Meta AI.

Testing showed that eight of those chatbots assisted the make-believe attackers in over half the responses, providing advice on "locations to target" and "weapons to use" in an attack, the study said.

The chatbots, it added, had become a "powerful accelerant for harm."

"Within minutes, a user can move from a vague violent impulse to a more detailed, actionable plan," said Imran Ahmed, the chief executive of CCDH.

"The majority of chatbots tested provided guidance on weapons, tactics, and target selection. These requests should have prompted an immediate and total refusal."

Perplexity and Meta AI were found to be the "least safe," assisting the researchers in most responses, while only Snapchat's My AI and Anthropic's Claude refused to help them in over half the responses.

In one chilling example, DeepSeek, a Chinese AI model, concluded its advice on weapon selection with the phrase: "Happy (and safe) shooting!"

In another, Gemini instructed a user discussing synagogue attacks that "metal shrapnel is typically more lethal."

Researchers found Character.AI also "actively" encouraged violent attacks, including suggestions that the person asking questions "use a gun" on a health insurance CEO and physically assault a politician he disliked.

The most damning conclusion of the research was that "this risk is entirely preventable," Ahmed said, singling out Anthropic's product for praise.

"Claude demonstrated the ability to recognize escalating risk and discourage harm," he said.

"The technology to prevent this harm exists. What's missing is the will to put consumer safety and national security before speed-to-market and profits."

AFP reached out to the AI companies for comment.

"We have strong protections to help prevent inappropriate responses from AIs, and took immediate steps to fix the issue identified," a Meta spokesperson said.

"Our policies prohibit our AIs from promoting or facilitating violent acts and we're constantly working to make our tools even better."

The study, which highlights the risk of online interactions spilling into real-world violence, comes after February's mass shooting in Canada, the worst in its history.

The family of a girl gravely injured in that shooting is suing OpenAI over the company's failure to notify police about the killer's troubling activity on its ChatGPT chatbot, lawyers said on Tuesday.

OpenAI had banned an account linked to Jesse Van Rootselaar in June 2025, eight months before the 18-year-old transgender woman killed eight people at her home and a school in the tiny British Columbia mining town of Tumbler Ridge.

The account was banned over concerns about usage linked to violent activity, but OpenAI has said it did not inform police because nothing pointed towards an imminent attack.

G.Schulte--BTB