-

No.1 Scheffler opens with bogey to fall from share of PGA lead

No.1 Scheffler opens with bogey to fall from share of PGA lead

-

Carrick says Man Utd future to be decided 'pretty soon'

-

'Out of shape' Lukaku named in Belgium World Cup squad

'Out of shape' Lukaku named in Belgium World Cup squad

-

Hearts ready to 'rip up the script' in Celtic title showdown

-

X pledges crackdown on illegal content in UK

X pledges crackdown on illegal content in UK

-

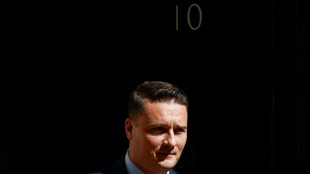

Possible contenders in UK Labour Party leadership race

-

Germany's Merz says wouldn't advise young people to move to US

Germany's Merz says wouldn't advise young people to move to US

-

Israel strikes Lebanon as talks in US enter second day

-

Kyiv in mourning after 24 killed as Ukraine, Russia swap POWs

Kyiv in mourning after 24 killed as Ukraine, Russia swap POWs

-

Beckham becomes first British billionaire sportsman

-

Aussie star, Danish clubbing ode through to Eurovision final

Aussie star, Danish clubbing ode through to Eurovision final

-

German Oscar winner Huller feels war guilt 'every day'

-

Thai lawmakers vote to revive clean air bill

Thai lawmakers vote to revive clean air bill

-

Bayern warn that Canada's Davies struggling to be fit for World Cup

-

Long-serving Coleman to end Everton career at end of season

Long-serving Coleman to end Everton career at end of season

-

Energy-hungry German industries in decline since Ukraine war: data

-

Gordon may have made last Newcastle appearance: Howe

Gordon may have made last Newcastle appearance: Howe

-

Denmark's Queen Margrethe has angioplasty in hospital: palace

-

Civilians caught in war of drones in eastern DR Congo

Civilians caught in war of drones in eastern DR Congo

-

French city reels from teen killing in drug-linked shooting

-

NZ passenger from hantavirus cruise quarantines in Taiwan

NZ passenger from hantavirus cruise quarantines in Taiwan

-

Sci-fi or battlefield reality? Ukraine's bet on drone swarms

-

Russia, Ukraine swap 205 prisoners of war each

Russia, Ukraine swap 205 prisoners of war each

-

Southeast Asia's largest dinosaur identified in Thailand

-

Rapprochement, debates, dissidents: US presidential visits to China

Rapprochement, debates, dissidents: US presidential visits to China

-

Indian magnate Adani agrees multi-million-dollar penalty in US court case

-

Drones to fight school shooters? One US company says yes

Drones to fight school shooters? One US company says yes

-

Mines 'draining Turkey's water sources', environmentalists warn

-

Zimbabwe tobacco hits new highs under smallholder contracts

Zimbabwe tobacco hits new highs under smallholder contracts

-

War imperils rare vultures' yearly odyssey to the Balkans

-

Russian border city shrugs off Baltic fears of attack

Russian border city shrugs off Baltic fears of attack

-

Bitter church row divides Armenia ahead of elections

-

India hikes fuel prices as Middle East war strains supplies

India hikes fuel prices as Middle East war strains supplies

-

Injured Mitoma fails to make Japan's World Cup squad

-

Malaysia PM says not opposed to fugitive financier's bid for pardon

Malaysia PM says not opposed to fugitive financier's bid for pardon

-

Passenger from hantavirus cruise quarantines on remote Pitcairn Island

-

Duplantis kicks off Diamond League season in China

Duplantis kicks off Diamond League season in China

-

Arsenal scent Premier League glory

-

Russia pummels Kyiv, killing at least 24 and denting peace hopes

Russia pummels Kyiv, killing at least 24 and denting peace hopes

-

Rare South-North Korea football match sells out in 12 hours

-

Six hantavirus cruise passengers land in Australia

Six hantavirus cruise passengers land in Australia

-

Markets wait on Trump-Xi summit, Seoul hits record

-

Solomon Islands elects opposition leader Matthew Wale as PM

Solomon Islands elects opposition leader Matthew Wale as PM

-

Football: 2026 World Cup stadium guide

-

Hearts must run Celtic gauntlet to claim historic Scottish title

Hearts must run Celtic gauntlet to claim historic Scottish title

-

All at stake for Bundesliga relegation battlers on final day

-

Trump traded hundreds of millions in US securities in 2026

Trump traded hundreds of millions in US securities in 2026

-

Can World Cup fuel North America's soccer boom?

-

Bulgaria's pro-Russians seek place after Radev win

Bulgaria's pro-Russians seek place after Radev win

-

Canada's Cohere embraces 'low drama' amid AI giant tumult

ChatGPT to get parental controls after teen's death

American artificial intelligence firm OpenAI said Tuesday it would add parental controls to its chatbot ChatGPT, a week after an American couple said the system encouraged their teenaged son to kill himself.

"Within the next month, parents will be able to... link their account with their teen's account" and "control how ChatGPT responds to their teen with age-appropriate model behavior rules," the generative AI company said in a blog post.

Parents will also receive notifications from ChatGPT "when the system detects their teen is in a moment of acute distress," OpenAI added.

The company had previewed a system of parental controls in a late August blog post.

That came one day after a court filing from California parents Matthew and Maria Raine, alleging that ChatGPT provided their 16-year-old son with detailed suicide instructions and encouraged him to put his plans into action.

The Raines' case was just the latest in a string of cases that have surfaced in recent months in which people were encouraged in delusional or harmful trains of thought by AI chatbots, prompting OpenAI to say it would reduce models' "sycophancy" towards users.

"We continue to improve how our models recognize and respond to signs of mental and emotional distress," OpenAI said Tuesday.

The company said it had further plans to improve the safety of its chatbots over the coming three months, including redirecting "some sensitive conversations... to a reasoning model" that puts more computing power into generating a response.

"Our testing shows that reasoning models more consistently follow and apply safety guidelines," OpenAI said.

S.Keller--BTB